Everyone Is Eager to Install and Use OpenClaw — Why Won't Enterprises Use It?

Recently, the explosive popularity of OpenClaw has turned one phrase into a buzzword across the AI community: AI that is “truly capable of doing the work.”

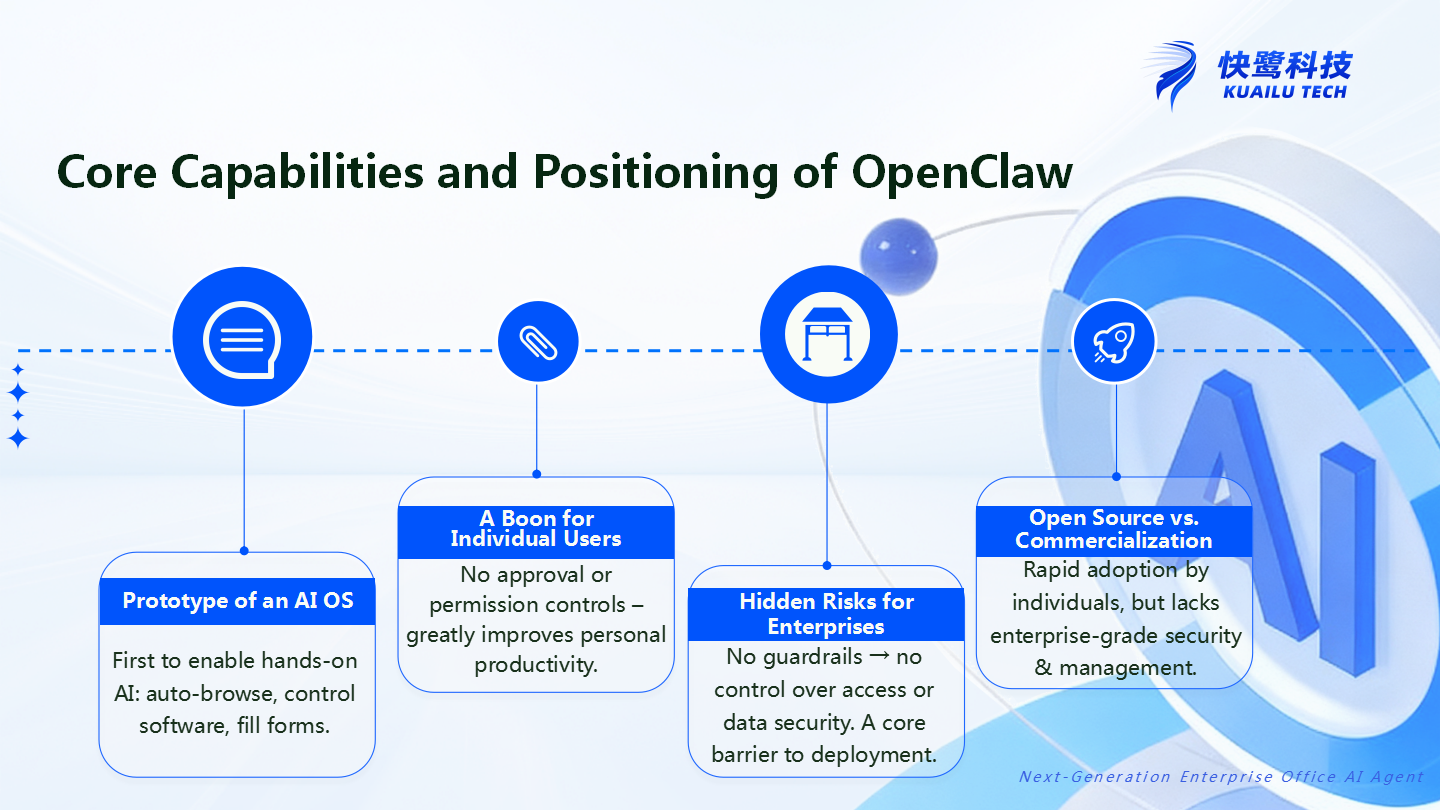

If large language models like ChatGPT are “AI browsers” that let you see how intelligent AI can be, then OpenClaw is “the prototype of an AI operating system.” For the first time, it gives AI the ability to truly take action: automatically opening web pages, operating software, filling out forms, and executing tasks across systems.

Individual developers, tech enthusiasts, and productivity seekers are thrilled. They are running OpenClaw on their own computers, as if witnessing a “digital employee” working on their behalf.

But a contradiction emerges: why is a tool that individuals enjoy so much one that enterprises are reluctant to use?

The answer is not a technical one but something more fundamental: as AI evolves from “being able to talk” to “actually doing the work,” who provides the safety net for enterprises?

I. The Truth About OpenClaw: The Cost of Power Is “No Guardrails”

To understand this issue, we must first recognize the essence of OpenClaw.

For the past few years, the large language model applications we have grown accustomed to, whether ChatGPT or Claude, have essentially been conversational AI. You ask, it answers, and it tells you what you want to know. It can draft copy, write code, and propose solutions, but its capabilities stop at producing output; it cannot actually carry out the work.

OpenClaw changes one thing: it gives AI the ability to take action for the first time.

You can tell it, “Help me check this month’s financial report,” and it will automatically open the financial system, enter the credentials, navigate to the report page, extract the data, organize it into a table, and return it to you. No human intervention is required throughout the process.

This is precisely why it is called “the prototype of an AI operating system”: it is not “answering” but “operating.”

But behind this power lies a critical characteristic: OpenClaw is “unguarded.”

What does that mean?

Anyone can install and run any skill. There is no approval process, no permission boundary, and no operational audit. Its design philosophy is “maximize capability”: give AI as much freedom as possible so it can do more.

For individual users, this design is a blessing. For an enterprise, it is a fatal flaw.

Open-source experimentation does not mean enterprise-ready deployment.

II. What Enterprises Need Is Not “Ability to Work,” but “Confidence to Use”

Let’s look at this from another perspective.

Imagine you are an IT manager at a company. Now there is an AI tool that can automatically log into your financial system, approval system, and database, and perform various operations. However, it has no permission controls—any employee can ask it to do anything, all operations leave no trace, and there is no way to audit when something goes wrong.

Would you dare to connect it to your company’s core business?

Almost certainly not.

This is the essential gap between OpenClaw and enterprise needs.

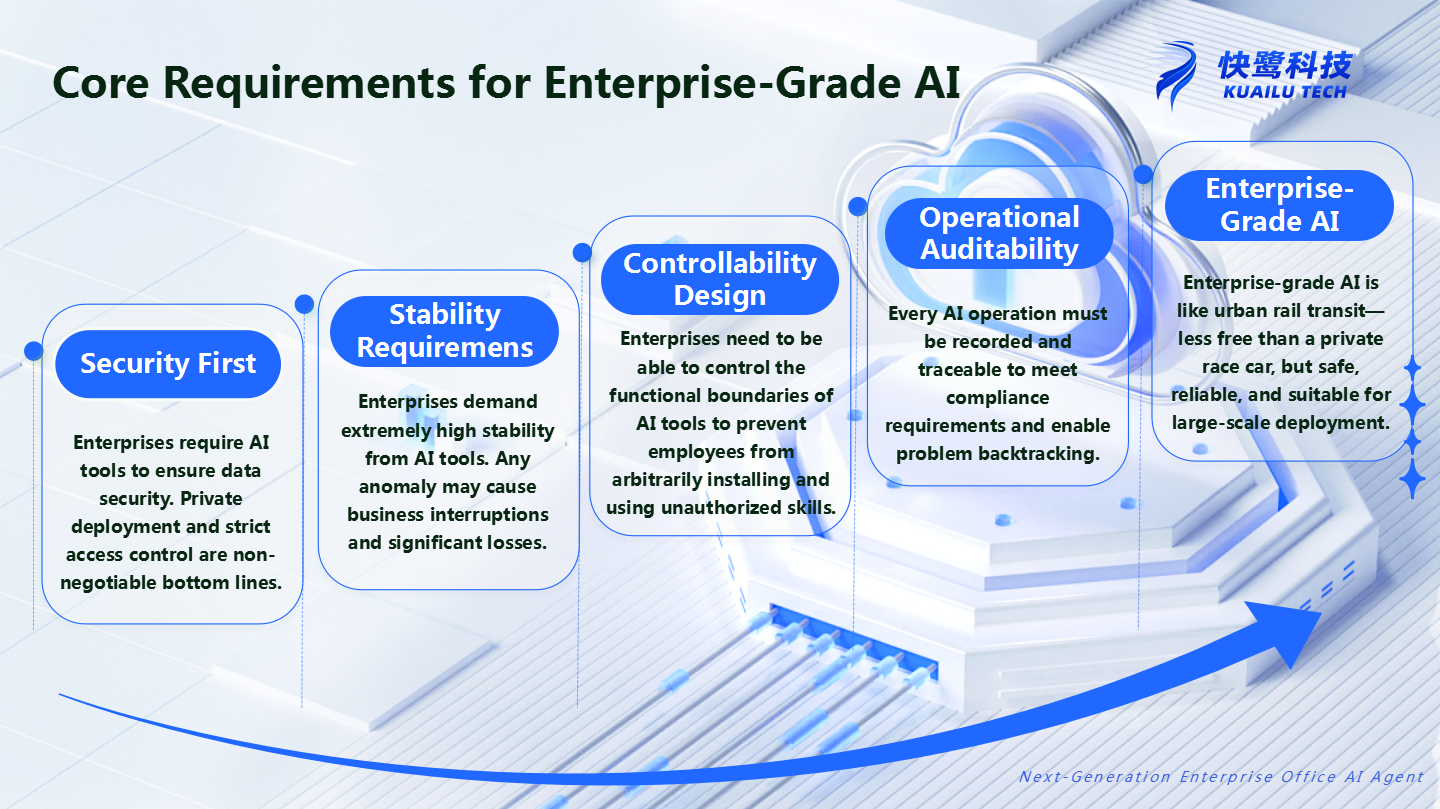

What enterprises need has never been the “most capable AI” but the “safest AI.” In other words, they need not just “ability to work” but “confidence to use, ability to use, and ease of use,” with “confidence to use” coming first.

So, what exactly does “confidence to use” require from an enterprise perspective?

- Security. Data must reside on the enterprise’s own servers, access rights must be centrally managed by the enterprise, and an AI tool must not create a channel for data leakage.

- Stability. Business operations must not be interrupted by anomalies in an AI tool. It requires predictable responsiveness and dependable availability, not a “sometimes smart, sometimes not” black box.

- Controllability. Employees cannot arbitrarily install unauthorized skills. There must be clear boundaries and approval processes for what each AI can and cannot do.

- Auditability. Every action must be traceable. When problems arise, there must be a way to backtrack and assign accountability. It cannot be that “no one knows what the AI did.”
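
To make “controllability” and “auditability” a little more concrete, here is a minimal Python sketch of a policy gate that every agent action would pass through before it runs. It assumes a simple deny-by-default allowlist per agent and an in-memory audit log; the names `PolicyGate`, `AuditEntry`, `finance-agent`, and the action strings are hypothetical and are not OpenClaw’s API or any product’s.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    """One record of an attempted AI action (hypothetical schema)."""
    timestamp: str
    user: str
    agent: str
    action: str
    allowed: bool


@dataclass
class PolicyGate:
    """Hypothetical gate that every agent action must pass through before execution."""
    # Maps an agent name to the set of actions it may perform (illustrative policy format).
    permissions: dict[str, set[str]]
    audit_log: list[AuditEntry] = field(default_factory=list)

    def authorize(self, user: str, agent: str, action: str) -> bool:
        # Controllability: anything not explicitly granted is denied by default.
        allowed = action in self.permissions.get(agent, set())
        # Auditability: record every attempt, allowed or denied, with who asked and when.
        self.audit_log.append(
            AuditEntry(
                timestamp=datetime.now(timezone.utc).isoformat(),
                user=user,
                agent=agent,
                action=action,
                allowed=allowed,
            )
        )
        return allowed


# Example: a finance agent may read reports but not approve payments.
gate = PolicyGate(permissions={"finance-agent": {"read_report"}})
print(gate.authorize("alice", "finance-agent", "read_report"))      # True
print(gate.authorize("alice", "finance-agent", "approve_payment"))  # False
for entry in gate.audit_log:
    print(entry.user, entry.agent, entry.action, entry.allowed)
```

Logging denied attempts as well as allowed ones is a deliberate choice in this sketch: when something goes wrong, the question is often what the AI tried to do, not only what it succeeded in doing. A real deployment would presumably write these records to durable, tamper-evident storage rather than a Python list.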

Without these four cornerstones, AI is merely a “personal assistant.” With these four capabilities, it becomes a trustworthy “enterprise-grade AI.”

If personal AI tools are like private racing cars—fast and free to operate but without any safety constraints—then enterprise AI is like a city’s rail transit system: it has a control center, safety doors, surveillance, and emergency plans. It may not be as fast as a race car, but it is safe for everyone to ride.

III. The Essential Difference Between “No Guardrails” and “Guardrails in Place”

Comparing OpenClaw with enterprise-grade AI reveals the gap more clearly.

- Permission Control: Anyone Can Install vs. Approval-Based Listing. In OpenClaw’s ecosystem, anyone can install any skill without restriction. This openness is an advantage for individuals but a disaster for enterprises. What enterprises need is a “skill store” mechanism: all skills must be approved by administrators before being listed. Employees can only use authorized skills and cannot install plugins arbitrarily. Every capability that enters the business workflow has been vetted.

- Data Security: Scattered Data vs. Private Deployment. When individuals use OpenClaw, data is scattered across various devices and cloud services, and users are responsible for their own security. Enterprises cannot accept this model. Core enterprise data, such as customer information, financial data, and business reports, must reside on the enterprise’s own servers. Private deployment is not an “optional feature”; it is a baseline requirement.

- Operational Audit: Untraceable vs. Fully Traceable. For ordinary users, the process by which OpenClaw executes tasks is a “black box.” Users may not know what it did or how it did it. What enterprises need is a “white box”: every operation must be logged, and every step must be traceable. When AI is authorized to perform sensitive operations such as financial payments or contract approvals, auditability is not a nice-to-have but a necessity.
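
The “skill store” idea from the first item can be sketched as a small approval lifecycle: a submitted skill stays unlisted until an administrator reviews it, and employees can install only approved skills. This is a minimal Python illustration under those assumptions; `SkillStore`, `SkillStatus`, and the skill names are hypothetical and do not describe how OpenClaw or any existing product implements skills.

```python
from enum import Enum


class SkillStatus(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


class SkillStore:
    """Hypothetical enterprise skill store: a skill is listed only after an admin approves it."""

    def __init__(self, admins: set[str]) -> None:
        self.admins = admins
        self.skills: dict[str, SkillStatus] = {}

    def submit(self, skill: str) -> None:
        # New skills enter review; they are not visible to employees yet.
        self.skills[skill] = SkillStatus.PENDING

    def review(self, reviewer: str, skill: str, approve: bool) -> None:
        # Only administrators may approve or reject, and only skills awaiting review.
        if reviewer not in self.admins:
            raise PermissionError(f"{reviewer} is not an administrator")
        if self.skills.get(skill) is not SkillStatus.PENDING:
            raise ValueError(f"{skill} is not awaiting review")
        self.skills[skill] = SkillStatus.APPROVED if approve else SkillStatus.REJECTED

    def listed(self) -> list[str]:
        # The catalog employees see: approved skills only.
        return sorted(
            name for name, status in self.skills.items() if status is SkillStatus.APPROVED
        )

    def install(self, employee: str, skill: str) -> str:
        # Installation is refused for anything pending, rejected, or never submitted.
        if self.skills.get(skill) is not SkillStatus.APPROVED:
            raise PermissionError(f"{skill} has not been approved for use")
        return f"{skill} installed for {employee}"


store = SkillStore(admins={"it-admin"})
store.submit("export-monthly-report")
store.submit("unvetted-browser-plugin")
store.review("it-admin", "export-monthly-report", approve=True)
print(store.listed())  # ['export-monthly-report']
print(store.install("bob", "export-monthly-report"))  # export-monthly-report installed for bob
try:
    store.install("bob", "unvetted-browser-plugin")
except PermissionError as err:
    print("blocked:", err)  # blocked: unvetted-browser-plugin has not been approved for use
```

The key design choice is that installation checks approval status at the moment of use, so a skill that was never submitted, is still pending, or was rejected is treated the same way: it cannot run.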

“No guardrails” is what makes OpenClaw powerful, but it is also what keeps it out of enterprises’ core business processes. This is not because it is not good enough; its design was simply never intended to serve enterprises.

IV. The Direction Is Right, but the Path Must Be Rebuilt

So, does this mean OpenClaw is heading in the wrong direction?

Quite the opposite.

OpenClaw’s greatest value lies not in how polished it is, but in proving that a direction is feasible: AI that “can do the work.”

It tells everyone in the most direct way: AI does not have to be confined to a dialog box. It can step out of conversation, into systems, and truly become a “digital employee” capable of executing tasks.

But getting the direction right does not mean the path can be directly reused.

Moving from personal tools to enterprise tools is not simply adding a layer of management interface on top of OpenClaw. It requires rethinking from the ground up: how to enable AI to maintain its “ability to work” while operating within guardrails?

This requires redesigning permission models, data isolation schemes, operational audit mechanisms, and skill approval workflows. It requires making AI both “safe to use” and “easy to use.” It requires embedding “security, stability, and controllability” into the product’s DNA, rather than treating them as after-the-fact patches.

V. Without Guardrails, Enterprises Dare Not Deploy AI in Core Business

The popularity of OpenClaw is a signal.

It tells us that AI is moving from “being able to talk” to “truly doing the work,” from “assistive tool” to “executing agent.” This is a paradigm shift in AI development, and everyone sees this direction.

But for enterprises, getting the direction right is only the first step.

The more powerful personal tools become, the more enterprises need guardrails. Not because enterprises are conservative, but because every operation in an enterprise touches data security, business processes, and compliance audits. Without guardrails, individuals may enjoy using AI, but enterprises dare not bring it into core business.

OpenClaw has proven that “AI can do the work.” But what enterprises need is not AI that “can do the work,” but AI they “dare to use.”

The distance between the two is not technology—it is guardrails.